Most of us spent the last 2 years learning LOTS about online teaching.

Many of us relied on our instincts, advice from tech-savvy colleagues, and baling wire.

Some turned to helpful books. (Both Doug Lemov and Courtney Ostaff offer lots of practical wisdom.)

But: do we have any RESEARCH that can point the way?

Yes, reader, we do…

Everything Starts with Working Memory

This blog often focuses on working memory: a cognitive capacity that allows new information to combine with a student’s current knowledge.

That is: working memory lets learning happen.

Many scholars these days use Cognitive Load Theory to organize and describe the intersection of working memory and teaching.

In my view, cognitive load theory has both advantages and disadvantages.

First, it’s true (well, as “true” as any scientific theory can be).

Second, it’s a GREAT way for researchers to talk with other researchers about working memory.

But — here’s the disadvantage — it’s rather complex and jargony as a way for teachers to talk with other teachers. (Go ahead, ask me about “element interactivity.”)

How can teachers get the advantages and avoid the disadvantages?

One recent solution: Oliver Lovell’s splendid book — which explains cognitive load theory in ways that make classroom sense to teachers.

Another solution, especially helpful for online teaching: a recent review article by Stoo Sepp and others.

“Shifting Online: 12 Tips for Online Teaching” takes the jargon of cognitive load theory and makes it practical and specific for teachers — especially when we need to use these ideas for online teaching.

Examples, Please

Because Team Sepp offers 12 tips, I probably shouldn’t review them all here. (Doing so would, ironically, overwhelm readers’ working memory.)

Instead, let me offer an example or two.

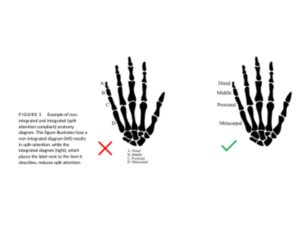

Cognitive load theory (rightly) focuses on the dangers of the split attention effect, b

Sepp translates that phrase into straightforward advice, as you can see in this diagram:

The version on the right integrates the descriptive words into the diagram: well done.

The version on the left, however, places the descriptive words below — readers must switch their focus back-n-forth to understand the ideas. In other words, the left version splits the reader’s attention. Boo.

Team Sepp’s straightforward advice: when teaching online, be sure that diagrams and videos embed descriptive words in the images (as clearly as possible).

Managing Nuances

This insight about split attention might seem to answer an enduring question for online instruction: should the teacher be visible?

That is: if I’ve created slides to map out the differences between comedy and tragedy, should my students be able to see me while they look at those slides?

At first glance, research into split attention suggests a clear “no.” If students look at my slides AND at me, well, they’re splitting their attention.

However, when this question gets researched directly, we find an interesting answer: the instructor’s presence does not directly reduce (or directly increase) students’ learning.

In other words: video of the teacher doesn’t create the split attention effect.

Sepp and colleagues combine that finding with this sensible insight:

“A visible instructor provides learners with important social cues, which help them feel connected to and be aware of other people in online settings.”

Researchers call this “social presence,” and it seems to have positive effects of its own. That is: students participate and learn more when they experience “social presence.”

As is always true, we can’t boil cognitive load theory down to “best practices.” (“No split attention ever!”)

Instead, we have to take situations and subtleties into account. (“Avoid split attention; but don’t worry that our presence creates split attention.”)

Team Sepp balance these complexities clearly and well.

Final Thoughts

This blog post introduces Sepp’s review, but it doesn’t summarize that review. In case we return to online learning at some point, you might take some time to read it yourself.

Its greatest benefits will come when individual teachers consider how these abstract concepts from cognitive load theory apply to the specifics of our curriculum, our students, and our own teaching work.

Sepp’s review article helps with exactly that translational work.

Sepp, S., Wong, M., Hoogerheide, V., & Castro‐Alonso, J. C. (2021). Shifting online: 12 tips for online teaching derived from contemporary educational psychology research. Journal of Computer Assisted Learning.

About Andrew Watson
