Imagine that you work at a school where these students consistently struggle compared to those students.

As teachers and school leaders, you’d like to help these students do better than they currently do; maybe do as well as those students. (Lower down in the post, I’ll say more about the two groups of students.)

What can you do?

Values Affirmation

One simple strategy has received a fair amount of research attention in recent years.

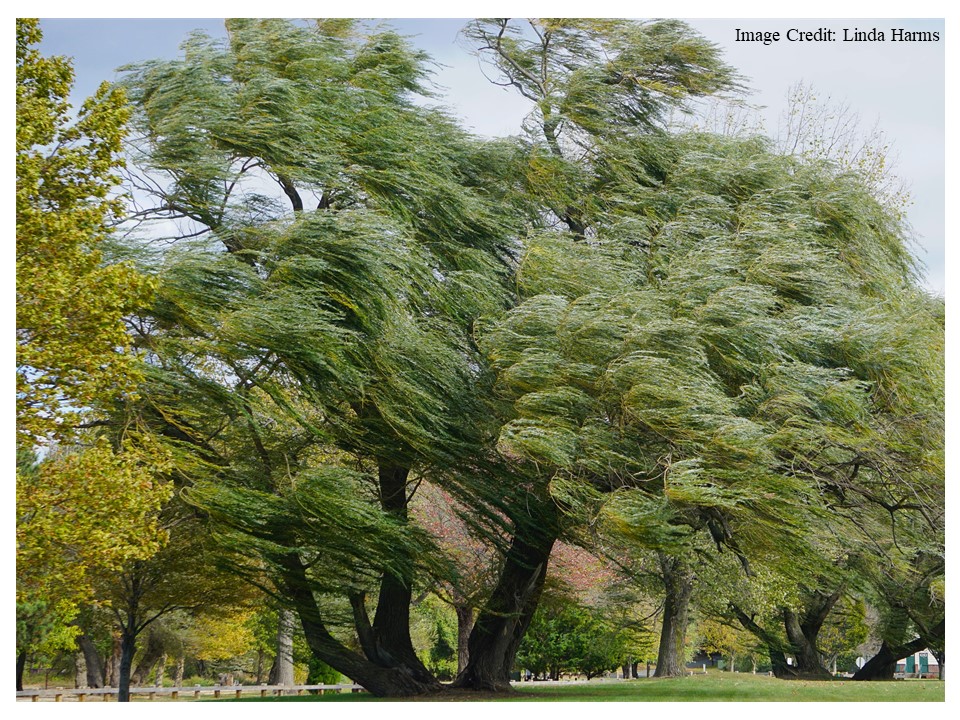

The idea goes something like this. If I am, say, a 12-year-old student, I might not really see how my school life fits with the rest of my life. The two can seem like different worlds.

If teachers could connect those “two different worlds” (even a little bit), students would feel more comfortable, less stressed, and more invested. Heck, they might even learn more.

To accomplish this mission, researchers provide students a list of values: loyalty, faith, friendship, hard work, justice, happiness, family, and so forth.

They then have students undertake a brief writing assignment. The instructions go something like this:

Choose one or more of these values that are most important for you personally. Write about why they are important. You won’t be graded on spelling or grammar; focus on explaining your ideas, values, and beliefs clearly.

The control group gets the same instructions, except that students choose values “that are the least important for you personally, but might be important to someone else.”

This strategy gets the somewhat lumpy name “values affirmation” — because it gives students a chance to affirm the values they hold.

This approach has been used in the United States in several studies, and has had some success. (See, for instance, here).

Across the Atlantic

But: would this strategy work elsewhere? What about, say, England?

Back in 2019, a group of researchers tried this approach in England, comparing students who typically underperform with students who don’t. (Again, more on this topic below.)

They had two groups of 11- to 14-year-old students (the underperformers and the typical performers) do three “values affirmation” writing sessions: one in September, one in January, and one in April.

Of course, half of each group did the “values affirmation” version of the writing exercises; the other half did the control writing assignment.

What results did they find?

As was true in the US, the values affirmation writings had no effect on the typical performers.

However, the exercise had a dramatic effect on the underperformers. Their math grades were higher at the end of the year (compared to the group that did the control writing). And their stress levels were considerably lower.

Because of the statistical method that the researchers used, I can’t say that values affirmation translated a B average into an A average. I can say that it had an effect size of 0.35 standard deviations — which is certainly noteworthy, especially to people who read research studies in this field.

In brief: this strategy costs literally zero dollars. It takes one hour over the course of a school year. And it helps underperforming students.

SO MUCH TO LOVE.

The Story Behind the Story

Up to this point, I’ve described what the researchers did. But I haven’t explained the psychological theory behind their strategy — because the theory prompts some controversy.

Now that we’ve looked at the strategy, let’s get to that theory.

Back in 1995, Claude Steele proposed a theory that has come to be called “Stereotype Threat.” It rests on a complex and counter-intuitive hypothesis.

To describe the theory, let me make up a non-existent stereotype: “blue-eyed people are bad at grammar.” (For the record, I’m regularly complimented on my blue eyes, and I teach a lot of grammar.)

Why might a blue-eyed student struggle on a grammar test?

Steele’s research suggests a surprising internal process. When my blue eyes and I sit down to take the grammar test, I know the material well. However, I also know that stereotypes suggest I’ll do badly.

What happens? I do NOT (as many suspect) give in and let the stereotype become a self-fulfilling prophecy. Instead, I decide to fight back. And, in a terrible paradox, my determination to disprove the stereotype leads to all sorts of counter-productive academic behaviors.

For instance: I might spend lots of time on the very easy problems to prove that I know this grammar. Alas, I take so long on the easy problems that I don’t have time for the harder ones.

In this unexpected way, Steele argues, stereotypes harm students’ learning.

Enter the Controversy

Over the years, many researchers have explored Stereotype Threat. They found that stereotypes about almost anything (race, ethnicity, gender, sexuality, academic major) can affect performance on almost anything (math tests, sports performance, leadership aspirations).

Steele’s book Whistling Vivaldi is, in fact, an unusually readable account of a complex psychological phenomenon. Many Learning and the Brain speakers (including Joshua Aronson and Sian Beilock) have studied and written about Stereotype Threat.

At the same time, other scholars have doubted this entire research field. They point to various statistical and procedural concerns to suggest that, well, there’s no real there there. (If you’re interested in the pushback, you can read more here and here.)

Putting It All Together

In the English study I’ve been describing, the relevant stereotype is “people from relatively lower socio-economic status just aren’t as smart as others.” According to the study’s authors, this stereotype is the predominant academic stereotype in England, whereas US stereotypes focus more on race, ethnicity, and gender.

So, in their study, the authors explored the effect of Values Affirmation on students who did (and did not) receive free school lunches: a common proxy for socio-economic status.

Sure enough, Values Affirmation had no effect one way or the other on students who did not receive free lunches. Because they faced fewer stereotypes about their academic performance, they didn’t suffer the harm that Stereotype Threat might cause.

But, for the students who DID receive free lunches, that same writing exercise helped a lot. This strategy made them feel more like they belonged, so they presumably didn’t need to work as hard to disprove stereotypes.

For that reason, as described above, one hour’s worth of writing reduced stress and raised grades.

TL;DR

This research suggests that a Values Affirmation writing assignment (it’s free!) can help some underperforming students learn more and feel less stress.

It also strengthens the case that Stereotype Threat might, despite the concerns about methodology, really be a thing.

Even if that second statement turns out not to be true, the first one is worth highlighting.

Want a simple, low-cost way to help struggling students? We’ve got one…

Hadden, I. R., Easterbrook, M. J., Nieuwenhuis, M., Fox, K. J., & Dolan, P. (2020). Self‐affirmation reduces the socioeconomic attainment gap in schools in England. British Journal of Educational Psychology, 90(2), 517-536.

Sherman, D. K., Hartson, K. A., Binning, K. R., Purdie-Vaughns, V., Garcia, J., Taborsky-Barba, S., … & Cohen, G. L. (2013). Deflecting the trajectory and changing the narrative: how self-affirmation affects academic performance and motivation under identity threat. Journal of Personality and Social Psychology, 104(4), 591.

About Andrew Watson